The Legal AI category has spent the last several weeks in a narrative pile-up.

A pure-play AI Legal vendor closed funding at an $11 billion valuation. A direct competitor closed at $5.5 billion. Within days, an open-source alternative dropped on GitHub claiming feature parity with both. Every Legal tech newsletter, podcast, and analyst note has been dissecting what it means.

Most of the analysis has missed the point.

The story being told is about who wins the Legal AI race. Who has the best foundation model. The slickest interface. The fastest growth chart. The richest funding round. The most ambitious open-source rebuttal.

It is a compelling story. It is also the wrong race.

Front-end AI is the easy part

Building a chat interface on top of a foundation model has become a fast project. The recent open-source release proves it. A working alternative to two of the most-funded companies in the category, available as free code anyone can run.

That is not a knock on any of those companies. They have done real work. But the speed at which their core capability can be replicated tells you something important about where the defensible value in Legal AI actually lives.

It does not live in the interface.

It does not live in the foundation model.

It lives in the system of record underneath the work.

What that system of record actually is

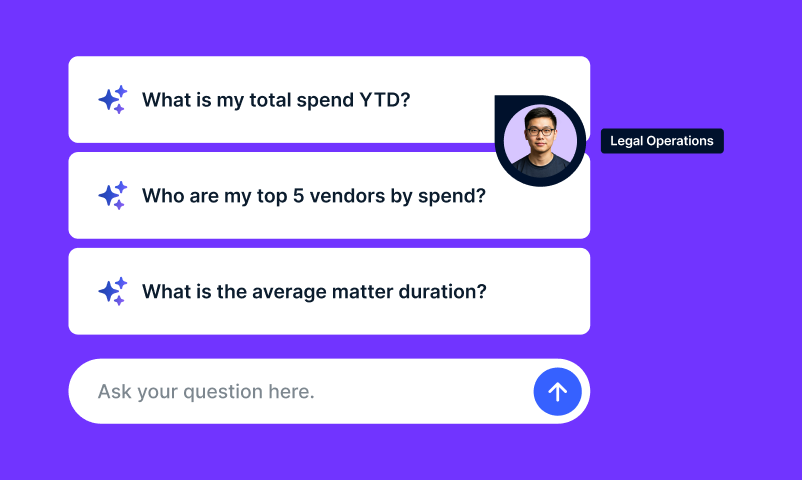

Legal operations does not happen in a chat window. It happens across matter management, spend management, vendor relationships, compliance frameworks, document repositories, billing systems, and the integrations that connect all of them to the rest of an enterprise.

Onit has spent over a decade building that infrastructure. Today it serves more than 3,000 corporate Legal teams and connects more than 23,000 law firms. It is the operational backbone that Legal departments at the world’s largest companies actually run on.

That is the layer where AI for Legal becomes durable. A chat interface can be replaced in a week. A system of record cannot be replaced in a year, and the data, workflows, and trust accumulated inside it cannot be replicated at any speed.

What we did in twelve hours

When the open-source Legal AI release dropped, our AI team was already deep into building Olava, Onit’s own small language model, and the broader AI capability layer of our platform. The release was not a surprise. It was a market signal that the front-end of Legal AI had reached commodity status, which is exactly the outcome we had been building toward.

So we ran a test.

Within twelve hours of the release, our team integrated it with Olava and put it in front of our own Legal department to evaluate against the work already underway on our platform. Real lawyers. Real workflows. Real comparison.

Twelve hours.

What that test confirmed was what we already believed. The interface is replicable. The foundation models are commoditizing. The defensible value of Legal AI lives in what surrounds it: the data, the integrations, the security posture, and the network of Legal teams and law firms doing the work.

The integration itself was not just twelve hours of work. It rested on years of platform investment: secure deployment pipelines, model evaluation infrastructure, data architecture, and integration scaffolding. That investment was the harder thing, and it is what made twelve hours possible. The twelve-hour test is a proof point about the platform, not just the team.

What we are committing to

Olava is real. It is in active development. It will be in production with our own Legal department in the coming weeks, and in customer pilots shortly after.

That is one piece of a broader commitment. Every new AI capability that emerges in this category, whether from a well-funded vendor, an open-source contributor, or anywhere else, can be evaluated, integrated, and deployed inside the platform our customers already trust, on the security posture they already know.

We are not going to chase the AI hype cycle. We are going to do the harder work of putting durable AI capability into the system of record where Legal actually happens.

Why this matters for the category

The companies winning the AI hype cycle today are racing on a dimension where speed compounds quickly and moats erode just as fast. Their models will be matched. Their interfaces will be cloned. Their funding rounds will not protect them from the underlying truth that everything they have built can be rebuilt in a fraction of the time it took to build it the first time.

The companies that will define what Legal AI actually becomes, five years from now, ten years from now, are the ones building at the infrastructure layer. The system of record. The system of process. The trust posture. The network of Legal teams and law firms doing the work.

Onit is one of those companies.

What comes next

The next 30 days will bring more: a behind-the-scenes look at our AI work, the workflows Olava is being designed to support inside our own Legal department, customers joining the early access program, a live broadcast with our AI team, and an honest accounting of what is working and what is not.

The bigger story will unfold over the next several quarters. The Legal AI race is not going to be won where the market is currently watching. It will be won underneath, in the infrastructure that makes Legal work actually run.

Onit is building there.